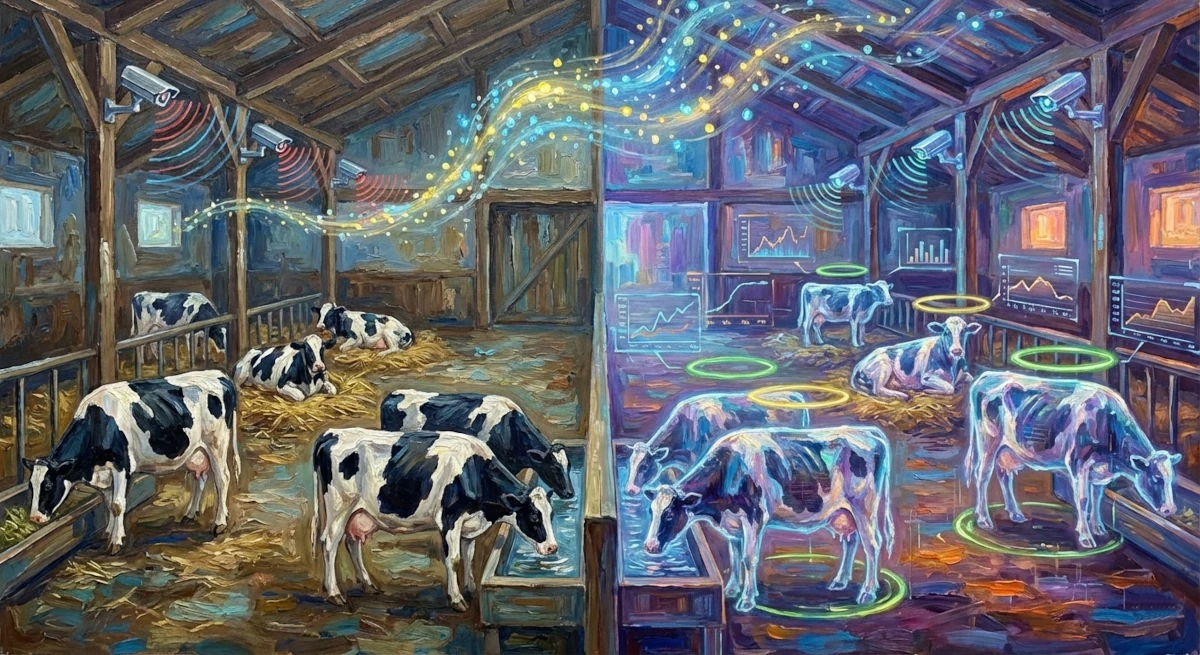

Picture this: Every cow on a dairy farm has its own personal health monitor, nutrition coach, and wellness tracker – all working 24/7 without any devices attached to the animal. This isn’t a futuristic fantasy; it’s happening right now thanks to artificial intelligence and a concept called “digital twins.”

Understanding Digital Twins: A Virtual Mirror for Every Cow

A digital twin is essentially a virtual replica of something in the physical world. In dairy farming, it’s a computer-generated version of each cow that mirrors the real animal’s behavior, health status, and nutritional needs in real time.

Think of it like the relationship between a professional athlete and the detailed performance data their coaches track. Coaches monitor everything – heart rate, movement patterns, fatigue levels, nutrition – to optimize performance and prevent injuries. A digital twin does the same thing for dairy cows, creating a detailed virtual profile that helps farmers provide better, more individualized care.

The reported benefits are substantial. Research suggests that digital twin technology can:

- Boost feed conversion efficiency by 15-20%, meaning cows convert food into milk more effectively

- Cut veterinary interventions by 25-30% through early detection of health problems

- Reduce greenhouse gas emissions per unit of milk by up to 25%

Why Every Move Matters: The Science of Cow Behavior

To understand why this technology matters, you first need to understand why watching cows so closely matters. Unlike humans, cows can’t tell you when they’re feeling unwell or uncomfortable. Instead, they communicate through their behavior, and if you know what to look for, those behaviors reveal a great deal.

Feeding behavior tells farmers about a cow’s energy balance and whether its nutritional needs are being met. Changes in feeding patterns can be the first sign that something is wrong, often appearing days before visible symptoms of illness.

Lying behavior is a direct indicator of comfort and well-being. Healthy cows lie down for 10-14 hours per day. If a cow isn’t lying down enough, it could signal problems ranging from uncomfortable bedding to painful lameness. Too much lying down can also indicate illness or metabolic issues.

The challenge has always been monitoring these behaviors accurately and continuously for every animal in the herd.

Why Old Methods Don’t Cut It Anymore

Traditional approaches to monitoring cow behavior simply can’t keep up with the demands of modern dairy farming:

Human Observation: Having farm workers watch and record animal behavior seems straightforward, but it’s incredibly impractical. It requires constant labor, it’s prohibitively expensive for large operations, and it’s inherently subjective. Studies show that when two different people observe the same cow, they disagree on what they’re seeing 15-25% of the time. You also can’t have someone watching every cow around the clock.

Wearable Sensors: Devices like electronic collars or leg bands were developed to solve the human observation problem, but they created new issues. Between 5% and 15% of these devices get lost or fall off. They need new batteries every few months. They can drift out of calibration, giving inaccurate readings. Perhaps most problematically, the devices themselves can change how cows behave, much like trying to study someone’s natural walking pattern while they’re wearing uncomfortable shoes.

Enter Computer Vision: This is where AI-powered cameras offer a game-changing alternative. Cameras don’t need batteries, don’t fall off, don’t alter behavior, and can monitor dozens of animals simultaneously. A single camera system can watch an entire section of barn continuously, day and night, without any human intervention.

Building the System: From Camera to Digital Cow

Creating a functional digital twin requires transforming raw video footage into actionable insights. Here’s how the five-stage process works:

Stage 1: Constant Surveillance

High-definition cameras are strategically placed throughout the barn to capture every angle and every animal. These aren’t ordinary cameras – they use infrared technology to see clearly even in complete darkness, ensuring continuous monitoring during the barn’s nighttime hours.

In the groundbreaking study conducted at Dalhousie University, researchers installed seven high-resolution cameras that recorded at 1920×1080 resolution and 25-30 frames per second, monitoring a commercial herd of approximately 80 Holstein dairy cows.

Stage 2: Finding and Following – Detection and Tracking

Raw video footage is useless without context. The AI needs to know what it’s looking at and which cow is which. This happens in two phases:

Phase One – Detection: An AI model called YOLOv11 (YOLO stands for “You Only Look Once”) analyzes each video frame and identifies every cow in view. It draws a virtual box around each animal, pinpointing its location in the barn. The model used in the study was trained on over 2,300 images and achieved a reported 99.4% accuracy rate in detecting cows.

Phase Two – Tracking: Here’s where it gets clever. Another algorithm called ByteTrack takes those detected cows and maintains their identity across time. It’s like a sophisticated game of “follow the cow,” ensuring that Cow #47 in one frame is correctly identified as the same Cow #47 in the next frame, even if she moves behind a post, another cow passes in front of her, or she walks to a different part of the barn.

This persistent identity tracking is crucial because the system needs to build a behavioral profile for each individual animal over time, not just identify that “a cow” is doing something.
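
The idea of carrying an identity from one frame to the next can be illustrated with a much simpler stand-in than ByteTrack: a greedy matcher that links each existing track to the new detection with the highest box overlap (intersection over union, IoU). This is a minimal sketch and not the study's tracker; the function names, the `(x1, y1, x2, y2)` box format, and the 0.3 threshold are assumptions made for illustration.

```python
from typing import Dict, List, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels; assumed format


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def update_tracks(tracks: Dict[int, Box], detections: List[Box],
                  next_id: int, min_iou: float = 0.3) -> Tuple[Dict[int, Box], int]:
    """Greedily match detections to tracks; unmatched detections get new IDs."""
    updated: Dict[int, Box] = {}
    unmatched = list(detections)
    for track_id, box in tracks.items():
        if not unmatched:
            break
        best = max(unmatched, key=lambda d: iou(box, d))
        if iou(box, best) >= min_iou:
            updated[track_id] = best
            unmatched.remove(best)
    for det in unmatched:
        updated[next_id] = det
        next_id += 1
    return updated, next_id


# Frame 1: two cows detected, so two new track IDs (1 and 2) are created.
tracks, next_id = update_tracks({}, [(100, 100, 300, 250), (500, 120, 700, 260)], next_id=1)
# Frame 2: both cows moved slightly and arrive in a different order.
tracks, next_id = update_tracks(tracks, [(510, 125, 710, 265), (110, 105, 310, 255)], next_id)
```

Note that this sketch drops a track as soon as it goes unmatched. ByteTrack is more robust: it also uses low-confidence detections and motion prediction, which is what lets an identity survive a brief occlusion.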

Stage 3: Understanding Behavior – The AI Brain

Now comes the really sophisticated part: figuring out what each cow is actually doing. The researchers tested two cutting-edge deep learning architectures:

The SlowFast Network uses a dual-pathway approach inspired by how human vision works. One pathway processes information slowly to understand the big picture – the cow’s overall posture and position. The other pathway processes information quickly to catch rapid movements – like the motion of a cow’s head while eating or the jaw movement during rumination.
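
The dual-pathway idea comes down to sampling the same clip at two different frame rates. The sketch below shows only that sampling step, not the network itself; the stride of 2 and the speed ratio of 8 are illustrative values, not settings reported for the study.

```python
from typing import List, Tuple


def slowfast_indices(num_frames: int, fast_stride: int = 2,
                     alpha: int = 8) -> Tuple[List[int], List[int]]:
    """Return frame indices for the slow and fast pathways.

    The fast pathway keeps every `fast_stride`-th frame; the slow pathway
    samples `alpha` times more sparsely. Both values are assumptions.
    """
    fast = list(range(0, num_frames, fast_stride))
    slow = list(range(0, num_frames, fast_stride * alpha))
    return slow, fast


# A ten-second clip at 25 frames per second has 250 frames.
slow, fast = slowfast_indices(250)
print(len(slow), len(fast))
```

With these values the fast pathway sees 125 frames and the slow pathway 16, so the slow pathway can afford to spend more computation on each frame's spatial detail.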

The TimeSformer Model takes a different approach using a transformer architecture, the same family of models behind breakthroughs like ChatGPT. Instead of convolutional layers, it uses “self-attention” mechanisms that can look at the entire video clip holistically, understanding relationships between different moments in time and different regions of space simultaneously.

After rigorous testing, TimeSformer emerged as the winner. It achieved 85.0% overall accuracy compared to SlowFast’s 82.3% – a statistically significant improvement. More impressively, it could process 22.6 frames per second on high-end hardware, proving it could work in real-time.

The Seven Behaviors: A Comprehensive Picture

The AI system classifies cow behavior into seven distinct categories:

- Standing and feeding

- Lying and feeding

- Drinking

- Lying (resting)

- Lying and ruminating

- Standing and ruminating

- Standing (inactive)

This granularity is critical. A simpler system might just report “the cow is standing,” but that tells you almost nothing. Is she actively eating? Is she ruminating, which indicates good digestive health? Or is she just standing idle? Each of these states has different metabolic, nutritional, and health implications.

How the AI “Sees” Behavior

To create their training dataset, researchers meticulously annotated 4,964 ten-second video clips, with two trained experts labeling each clip to ensure accuracy. Their agreement rate exceeded 95%, confirming the reliability of the labels.

But 4,964 clips weren’t enough to train a robust AI system, especially when some behaviors (like drinking) are naturally rare. So the researchers used data augmentation – essentially creating variations of existing clips by adjusting brightness, rotating angles, cropping differently, and applying other transformations. This expanded the dataset to 9,600 clips and ensured rare behaviors were adequately represented.
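
To make the augmentation step concrete, here is a minimal pure-Python sketch of two of the transformations mentioned above, brightness adjustment and cropping, applied consistently to every frame of a clip. The frame layout (nested lists of grayscale values), the function names, and the parameter ranges are assumptions for illustration; a real pipeline would operate on image arrays with a video library.

```python
import random
from typing import List

Frame = List[List[int]]  # grayscale frame as rows of 0-255 values (assumed layout)


def adjust_brightness(frame: Frame, factor: float) -> Frame:
    """Scale every pixel by `factor`, clamping to the valid 0-255 range."""
    return [[min(255, max(0, round(p * factor))) for p in row] for row in frame]


def crop(frame: Frame, top: int, left: int, height: int, width: int) -> Frame:
    """Cut a `height` x `width` window starting at (top, left)."""
    return [row[left:left + width] for row in frame[top:top + height]]


def augment_clip(clip: List[Frame], rng: random.Random, margin: int = 1) -> List[Frame]:
    """Apply one random brightness factor and one random crop to all frames.

    Using the same parameters for every frame keeps the motion in the clip
    consistent, which matters for a behavior classifier.
    """
    factor = rng.uniform(0.7, 1.3)
    height = len(clip[0]) - margin
    width = len(clip[0][0]) - margin
    top = rng.randint(0, margin)
    left = rng.randint(0, margin)
    return [adjust_brightness(crop(f, top, left, height, width), factor) for f in clip]


# Three tiny 4x4 frames stand in for a real video clip.
frame = [[10, 60, 110, 160], [20, 70, 120, 170], [30, 80, 130, 180], [40, 90, 140, 190]]
clip = [frame, frame, frame]
augmented = augment_clip(clip, random.Random(0))
```

Generating more augmented variants for rare classes such as drinking than for common ones is one way to even out the class balance.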

Here’s something fascinating: the researchers used attention visualization to see what the AI actually looks at when making decisions. The results suggested the model was relying on anatomically relevant cues rather than irrelevant background features:

- When identifying feeding or drinking, the model focused on the cow’s head and muzzle

- When distinguishing lying from standing, it focused on the torso and limbs

- The attention patterns closely matched what a knowledgeable human observer would focus on

This biological validity is crucial for building trust in the system’s decisions.

Stage 4: From Behavior to Biology – Nutritional Modeling

Once the AI has classified behaviors and timed how long each cow spends in each state, that information gets organized into clean, structured data files – think of them as detailed spreadsheets with columns for cow ID, behavior type, start time, end time, duration, and confidence level.
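
One way to picture these records is as a small data class, with consecutive clips of the same behavior merged into a single bout. This is a sketch under assumptions: the field names, the ten-second clip length as the merging unit, and the averaging of confidence are illustrative choices, not the study's actual file format.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class BehaviorBout:
    cow_id: int
    behavior: str
    start_s: float
    end_s: float
    confidence: float  # mean confidence of the clips merged into this bout

    @property
    def duration_s(self) -> float:
        return self.end_s - self.start_s


def merge_clips(cow_id: int, clips: List[Tuple[float, str, float]],
                clip_len_s: float = 10.0) -> List[BehaviorBout]:
    """Merge back-to-back clips with the same label into bouts.

    Each clip is (start_time_s, behavior_label, confidence), sorted by start time.
    """
    bouts: List[BehaviorBout] = []
    counts: List[int] = []  # number of clips merged into each bout
    for start, label, conf in clips:
        last = bouts[-1] if bouts else None
        if last is not None and last.behavior == label and abs(last.end_s - start) < 1e-6:
            n = counts[-1]
            last.confidence = (last.confidence * n + conf) / (n + 1)
            last.end_s = start + clip_len_s
            counts[-1] = n + 1
        else:
            bouts.append(BehaviorBout(cow_id, label, start, start + clip_len_s, conf))
            counts.append(1)
    return bouts


clips = [(0.0, "lying", 0.9), (10.0, "lying", 0.8), (20.0, "drinking", 0.95)]
bouts = merge_clips(47, clips)
for bout in bouts:
    print(bout.cow_id, bout.behavior, bout.start_s, bout.end_s, bout.duration_s)
```

Each resulting bout maps directly onto one spreadsheet row: cow ID, behavior, start, end, duration, and confidence.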

This structured data then feeds into established nutritional models based on NRC (National Research Council) equations. These mathematical models can calculate:

- How much food each cow is consuming

- How much energy each cow is expending

- Whether each cow’s nutritional needs are being met

- How feed rations should be adjusted for optimal health and milk production

This transforms behavioral observation into actionable nutritional guidance for each individual animal.
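
The article does not say which NRC equations the study applies. As one concrete illustration, the NRC (2001) dairy cattle publication includes a commonly cited dry matter intake prediction for lactating cows based on fat-corrected milk, body weight, and week of lactation. The sketch below implements that equation as it is usually quoted; the function names and the example cow are hypothetical, the coefficients should be checked against the NRC publication before any real use, and how the study connects behavior durations to equations like this is not described here.

```python
import math


def fat_corrected_milk(milk_kg: float, fat_pct: float) -> float:
    """4% fat-corrected milk in kg/day."""
    fat_kg = milk_kg * fat_pct / 100.0
    return 0.4 * milk_kg + 15.0 * fat_kg


def predicted_dmi(milk_kg: float, fat_pct: float,
                  body_weight_kg: float, week_of_lactation: float) -> float:
    """Predicted dry matter intake (kg/day), NRC (2001) form as commonly cited."""
    fcm = fat_corrected_milk(milk_kg, fat_pct)
    # Intake is depressed in early lactation; this term approaches 1 over time.
    lactation_adjust = 1.0 - math.exp(-0.192 * (week_of_lactation + 3.67))
    return (0.372 * fcm + 0.0968 * body_weight_kg ** 0.75) * lactation_adjust


# Hypothetical cow: 35 kg milk/day at 3.8% fat, 650 kg body weight, week 10.
dmi = predicted_dmi(35.0, 3.8, 650.0, 10.0)
print(round(dmi, 1))
```

A predicted intake like this could then be compared with the feeding time the vision system observes, flagging cows whose behavior suggests they are eating less than the model expects.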

Stage 5: Bringing It All Together – 3D Visualization

The final piece is making all this data accessible and understandable for farm managers. The system uses Unity – a professional game development platform – to create a 3D virtual barn where each real cow has a corresponding avatar.

Farm managers can look at a dashboard and instantly see:

- Which cows are eating, resting, or ruminating

- How long each cow has been in its current state

- Any unusual patterns or deviations from normal behavior

- Historical trends for each animal

It’s like SimCity or The Sims, but instead of fictional characters, each avatar represents a real cow whose health and welfare depend on the insights this system provides.

Real-World Performance: How Well Does It Actually Work?

The system isn’t just theoretically impressive – it performs remarkably well in practice.

Classification Accuracy: The TimeSformer model achieved strong performance across all behavior types:

- Standing and feeding: 94.7% (F1-score)

- Drinking: 90.1% accuracy

- Lying: 87.8% accuracy

- The overall macro-F1 score of 0.84 indicates consistently strong performance across all categories
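
Macro-F1 is not the same thing as accuracy: it is the unweighted mean of the per-class F1-scores, so a rare behavior like drinking counts as much as a common one like lying. The following sketch computes it from scratch on a made-up set of labels; the data are invented purely to show the arithmetic.

```python
from typing import List


def macro_f1(y_true: List[str], y_pred: List[str]) -> float:
    """Unweighted mean of per-class F1-scores."""
    labels = sorted(set(y_true) | set(y_pred))
    scores = []
    for label in labels:
        pairs = list(zip(y_true, y_pred))
        tp = sum(1 for t, p in pairs if t == label and p == label)
        fp = sum(1 for t, p in pairs if t != label and p == label)
        fn = sum(1 for t, p in pairs if t == label and p != label)
        denom = 2 * tp + fp + fn
        # F1 = 2*TP / (2*TP + FP + FN); defined as 0 when the class never occurs.
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)


# Invented example: one lying clip is misclassified as drinking.
y_true = ["lying", "lying", "lying", "drinking"]
y_pred = ["lying", "lying", "drinking", "drinking"]

accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
score = macro_f1(y_true, y_pred)
print(accuracy, round(score, 3))
```

Here accuracy is 0.75 while macro-F1 is about 0.733 (the mean of 0.8 for lying and roughly 0.667 for drinking), which shows why the two numbers should not be read interchangeably.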

Processing Speed: In a real-world test with 16 cows being monitored simultaneously, the entire pipeline (detection, tracking, and classification) achieved an average processing time of 180 milliseconds per frame. That works out to about 5.5 frames per second (1,000 ms ÷ 180 ms) for every tracked cow, which is more than adequate for continuous monitoring.

Scalability: These performance metrics suggest the system could scale toward commercial operations, although the speed test covered 16 cows at a time rather than an entire herd.

Where the AI Still Struggles: Understanding the Limitations

Every technology has limitations, and being honest about them is important for future improvements.

The most common mistakes the AI makes are actually quite revealing. The model most frequently confuses:

- Lying vs. Lying and Ruminating (12-15% confusion rate)

- Standing vs. Standing and Ruminating

Notice the pattern? The confusion occurs between behaviors that look nearly identical except for one subtle difference: jaw movement during rumination. Even for experienced human observers, distinguishing a cow that’s quietly lying down from one that’s lying down and chewing cud requires close attention to subtle head movements. The AI faces the same challenge.

Environmental Factors also impact performance. The model’s accuracy drops 8-12% when operating on infrared night-vision footage compared to daytime video. Barn structures that partially block the camera’s view of cows also create challenges.

These aren’t fundamental flaws – they’re engineering challenges with clear solutions on the horizon.

The Three Revolutionary Benefits for Farming

This technology fundamentally changes how dairy farming works in three crucial ways:

1. Precision Agriculture: Individual Care at Scale

Traditional farming treats the herd as a single unit – everyone gets the same feed, the same schedule, the same environment. It’s like a school where every student gets identical lessons regardless of their needs.

Digital twins enable truly individualized care. If Cow #23 is spending less time eating but more time lying down, her feed ration can be adjusted specifically for her. If Cow #61 is consistently active and productive, her nutrition program can be optimized for her metabolism. Each animal becomes an individual patient rather than a member of a generic group.

2. Predictive Health Monitoring: Catching Problems Early

Perhaps the most powerful benefit is early disease detection. By establishing a behavioral baseline for each cow, the system can automatically flag deviations that might indicate illness.

A cow that suddenly reduces her feeding time by 20% might be developing digestive issues. One that’s spending significantly less time lying down could be experiencing pain from an injury or infection. These changes often appear days before visible symptoms like reduced milk production or obvious signs of distress.
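
A baseline check of this kind can be sketched in a few lines. The example below compares a cow's latest daily feeding time against the mean of the preceding week; the seven-day window and the function name are assumptions, and the 20% threshold simply mirrors the example in the text rather than a validated clinical cutoff.

```python
from statistics import mean
from typing import List


def flag_drop(daily_minutes: List[float], baseline_days: int = 7,
              drop_threshold: float = 0.20) -> bool:
    """Return True if the latest day is at least `drop_threshold` below
    the mean of the preceding `baseline_days` days."""
    if len(daily_minutes) < baseline_days + 1:
        return False  # not enough history to form a baseline
    baseline = mean(daily_minutes[-(baseline_days + 1):-1])
    today = daily_minutes[-1]
    return baseline > 0 and (baseline - today) / baseline >= drop_threshold


# Invented feeding times in minutes per day; the baseline week averages 300.
feeding = [300, 310, 295, 305, 300, 290, 300, 230]
print(flag_drop(feeding))                  # latest day is about 23% below baseline
print(flag_drop(feeding[:-1] + [280]))     # about 7% below baseline
```

A production system would likely use a more robust baseline (for example, a median or a per-cow seasonal model), but the principle is the same: each cow is compared against her own history, not a herd average.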

Early intervention – treating a problem when it first starts rather than waiting until it’s severe – is better for the cow (less suffering, faster recovery), better for the farmer (lower treatment costs, less lost productivity), and better for food safety (less need for antibiotics and medications).

3. Objective, Evidence-Based Decision Making

Farming has traditionally relied heavily on experience, intuition, and observation – all valuable, but also subjective and limited. Digital twins provide hard data: precise measurements, statistical trends, predictive analytics.

This empowers farmers to make better decisions backed by evidence. Should we change the bedding material? The lying-time data will show if it’s helping. Is the new feed formulation working? Feeding duration and rumination patterns will reveal the answer. Is this cow ready to be dried off before calving? Her behavioral trends can inform that decision.

It’s not about replacing farmer expertise – it’s about augmenting it with tools that provide insights no human could gather alone.

Looking Ahead: The Next Generation of Technology

The researchers identify several exciting directions for future development:

Multi-Modal Sensing: Combining video with other data sources like acoustic sensors (to hear chewing and rumination sounds) or thermal cameras (to detect temperature changes indicating fever or inflammation) could push accuracy even higher, especially in challenging conditions.

Edge Computing: Currently, the system requires powerful computer hardware. Future versions will use optimized “lightweight” models that can run on affordable on-farm computers, making the technology accessible to more farmers.

Federated Learning: This approach would allow AI models to learn from data across multiple farms without those farms having to share their actual video footage – preserving privacy while enabling models to become smarter through broader experience.
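
The core aggregation step in federated learning can be sketched as a weighted average of model parameters, in the style of the well-known federated averaging (FedAvg) scheme. The article does not say which federated method the researchers have in mind, so treat this as an illustration only; the tiny two-parameter "models" and farm sizes are invented.

```python
from typing import List, Sequence


def federated_average(farm_weights: Sequence[Sequence[float]],
                      farm_sizes: Sequence[int]) -> List[float]:
    """Average each parameter across farms, weighted by local dataset size."""
    total = sum(farm_sizes)
    n_params = len(farm_weights[0])
    return [
        sum(w[i] * n for w, n in zip(farm_weights, farm_sizes)) / total
        for i in range(n_params)
    ]


# Two farms train locally and send only their parameters, never their video.
farm_a = [0.0, 1.0]   # trained on 100 clips
farm_b = [1.0, 3.0]   # trained on 300 clips
global_weights = federated_average([farm_a, farm_b], [100, 300])
print(global_weights)
```

Only the parameter lists leave each farm; the footage used to train them stays on site, which is the privacy property described above.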

Improved Validation: Future studies will use even more rigorous testing methods, ensuring that models are tested on completely different cows than they were trained on, preventing any potential data leakage and confirming true generalization capability.
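
Splitting by animal rather than by clip is straightforward to express in code. The sketch below assigns whole cows to either the training or the test set so that no individual appears in both; the function name, the 20% test fraction, and the sample IDs are assumptions for illustration.

```python
import random
from typing import List, Tuple


def split_by_cow(clip_cow_ids: List[int], test_fraction: float = 0.2,
                 seed: int = 0) -> Tuple[List[int], List[int]]:
    """Return (train_indices, test_indices) with no cow in both sets."""
    cows = sorted(set(clip_cow_ids))
    rng = random.Random(seed)
    rng.shuffle(cows)
    n_test = max(1, round(len(cows) * test_fraction))
    test_cows = set(cows[:n_test])
    train = [i for i, c in enumerate(clip_cow_ids) if c not in test_cows]
    test = [i for i, c in enumerate(clip_cow_ids) if c in test_cows]
    return train, test


# Ten clips from five cows, two clips each.
clip_cow_ids = [1, 1, 2, 2, 3, 3, 4, 4, 5, 5]
train_idx, test_idx = split_by_cow(clip_cow_ids)

train_cows = {clip_cow_ids[i] for i in train_idx}
test_cows = {clip_cow_ids[i] for i in test_idx}
print(train_cows & test_cows)  # empty set: no cow is shared
```

A random split over clips would scatter each cow's footage across both sets, letting the model score well by recognizing individual animals or their usual stalls instead of the behavior itself.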

The Bigger Picture: Farming with Empathy and Efficiency

At its heart, this technology represents something profound: the ability to truly understand and respond to the needs of every individual animal under a farmer’s care.

For too long, the demands of scale have forced compromises. You simply cannot have humans watching every cow every moment, noticing every subtle change, optimizing every individual’s nutrition and environment. The economics don’t work. But the consequences are real – missed illnesses, suboptimal nutrition, preventable suffering, lost productivity.

Digital twins bridge that gap. They make it economically feasible to provide individualized, attentive care at commercial scale. They turn every dairy operation into a precision health and wellness system where each cow receives the attention she deserves.

This isn’t just about efficiency metrics, though those matter. It’s about building a more sustainable, more humane, more responsible approach to animal agriculture. It’s about farms that are simultaneously more productive and more ethical. It’s about using our most advanced technologies not to replace the farmer’s care and judgment, but to extend it to every animal in the herd.

As this technology matures and spreads, we’re witnessing the emergence of a new paradigm in agriculture – one where data and compassion work hand in hand, where individual welfare and collective productivity reinforce rather than contradict each other, and where the farmer’s ancient role as caretaker is elevated and empowered by the most modern tools humanity has created.

The virtual cow isn’t replacing the real one. It’s giving her a voice, making her visible in ways she never has been before, and ensuring that her needs are heard, understood, and met. That’s the true promise of artificial intelligence in agriculture – not replacing human care, but making it more effective, more comprehensive, and more humane than ever before.

Source

Study: Video-Based Cattle Behavior Detection for Digital Twin Development in Precision Dairy Systems

Authors: Shreya Rao, Eduardo Garcia, Suresh Neethirajan (2025)

Read the full paper: https://www.biorxiv.org/content/10.1101/2025.11.13.688203v1
